Nvidia’s Chips Became the World’s Weirdest Diplomatic Tool

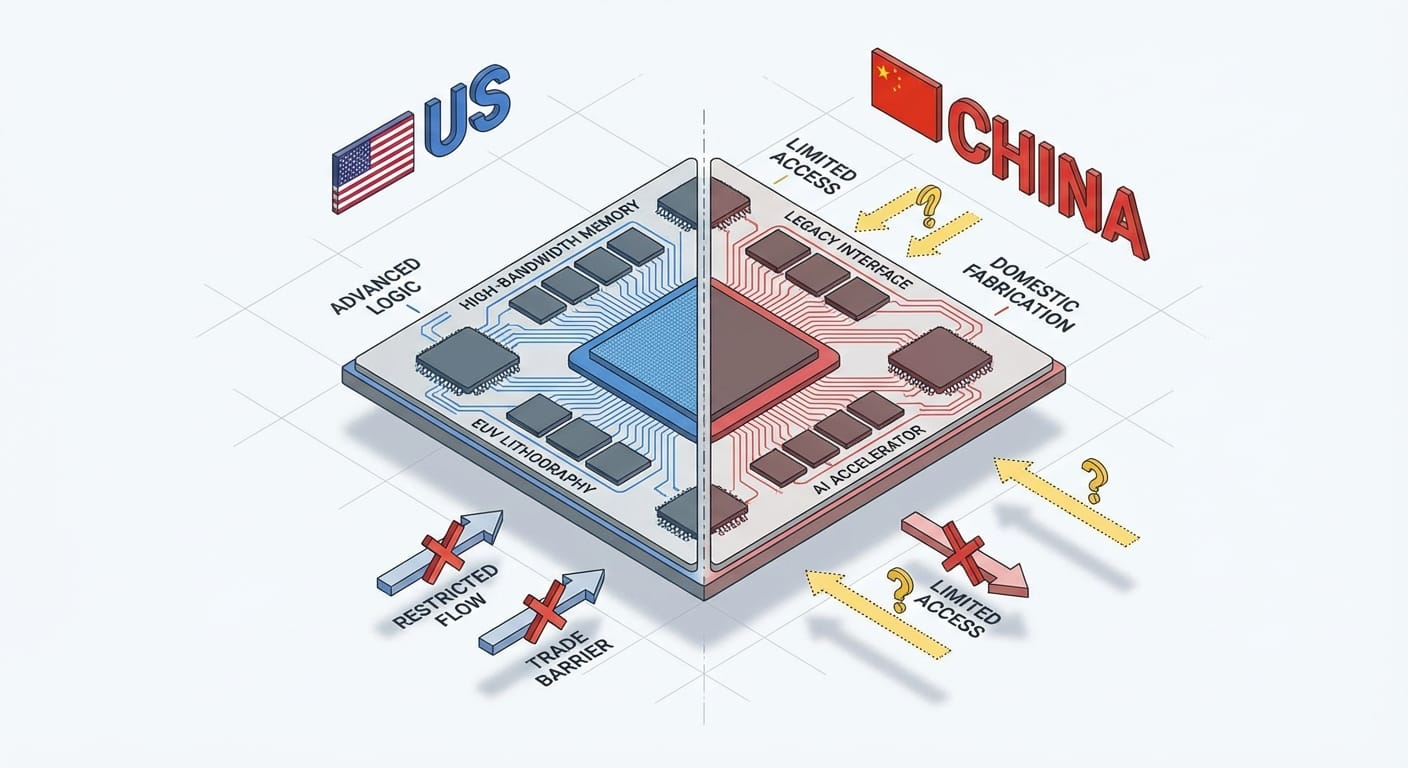

US–China AI competition in 2026 is increasingly a chip-and-infrastructure battle, with Nvidia exports acting like a diplomatic throttle. The US still leads in frontier chips and models, while China’s advantage is fast diffusion and massive-scale deployment.

The hottest US–China “AI diplomacy” right now isn’t happening in a conference room. It’s happening in shipping manifests, export license meetings, and the awkward gap between “we’re easing trade tensions” and “we’re still not letting you buy the good GPUs.”

And if you’re building anything that touches AI—products, infrastructure, startups, even internal tooling—this isn’t just geopolitics-as-entertainment. It’s supply chain reality.

Caption: When GPUs become policy, the supply chain starts feeling… personal.

So what’s the actual problem?

Here’s the thing: the US and China aren’t arguing about “AI” in the abstract. They’re arguing about who gets to scale frontier AI—and scaling frontier AI means advanced chips, massive data centers, and the ability to train and deploy models faster than the other guy.

In early 2026, a few tensions are all colliding at once:

- Military + economic rivalry is driving a “prisoner’s dilemma” dynamic—both sides accelerate because slowing down feels like losing. [1]

- Export controls keep changing—tightened, loosened, reworded, re-tightened—especially around Nvidia’s high-end GPUs. [4] [6]

- The US still leads in top-end chips and many frontier closed models, but China is scary good at diffusion: getting AI into products, factories, drones, robots, and everyday apps at scale. [4] [6]

The result: what some analysts call “AI bipolarity”—two massive ecosystems competing, influencing the rest of the world, and pulling supply chains in different directions. [4] [6]

Zoom out: why Nvidia is at the center of this

Let’s be real: Nvidia didn’t ask to become a geopolitical chess piece, but its GPUs ended up being the “keys” to the modern AI kingdom.

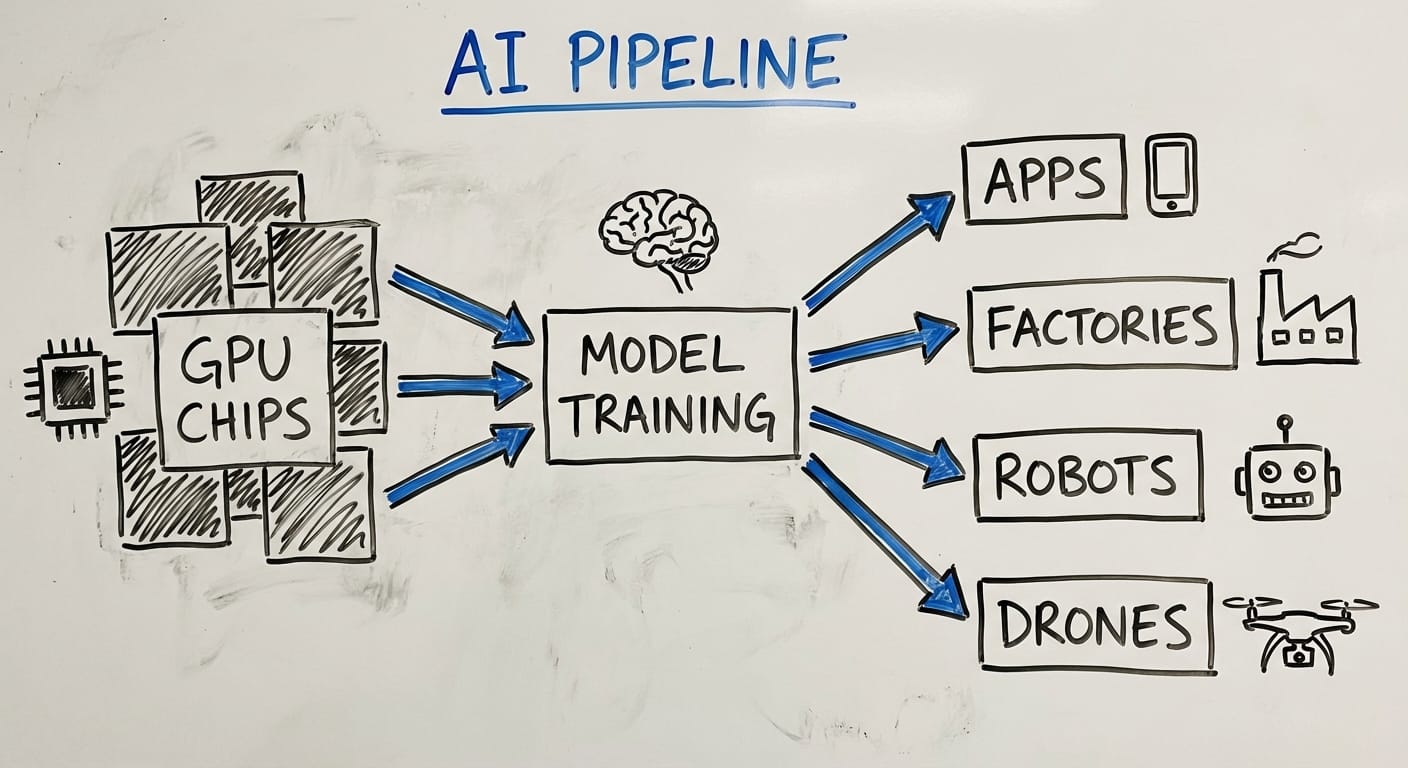

High-end accelerators (think H100/A100-class capability) are the difference between:

- training a frontier model on a competitive timeline, vs.

- being stuck optimizing around shortages, using older chips, or getting creative with distributed training.

That’s why US controls on advanced chip exports are designed to slow China’s ability to train frontier models—without completely detonating global business relationships. And yes, that balance has been… messy. [4] [6]

Caption: The GPU is the new oil barrel. Less dramatic, more expensive.

Deep dive: what each side is optimizing for

Here’s what most people miss: the US and China aren’t playing identical games.

The US playbook (frontier + monetization)

- Keep the lead in advanced chips and cutting-edge model training.

- Push closed-source frontier systems aimed at AGI-adjacent capability (think OpenAI, DeepMind). [4]

- Use alliances (like supply chain security efforts sometimes described as “Pax Silica”) to protect access to manufacturing and materials. [6]

- Deal with a very unsexy bottleneck: power and infrastructure for data centers. Yes, electricity is now a competitive advantage. [4] [6]

China’s playbook (diffusion + scale)

- Ship applied AI everywhere—consumer apps, industrial systems, robotics, drones.

- Lean into open-source models that spread fast and attract developers globally, including in the Global South. [4]

- Build infrastructure aggressively—China’s industrial capacity helps them deploy faster at scale. [6]

- Invest heavily in domestic chip production to reduce dependency, even though it’s still behind at the leading edge. [4] [6]

Comparison: who’s “winning” depends on what you mean

Look, I’ll be honest… “who’s winning the AI race” is one of those questions that sounds smart but usually turns into a food fight.

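Here’s a cleaner way to think about it: split the question into layers (frontier chips, frontier models, and deployment at scale), because the answer changes depending on which layer you look at.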

The Bottom Line

The US has the edge in frontier chips and many top-end models, but China’s strength is turning AI into infrastructure and products fast. That’s why this feels less like a finish line and more like two flywheels spinning in different ways. [4] [6]

Caption: Chips → training → deployment. That pipeline is the whole game.

FAQ: the questions everyone’s quietly asking

1) Are US export controls on Nvidia chips still a big deal in 2026?

Yep. Even with some easing in broader trade tensions, leading-edge restrictions persist, and the back-and-forth tightening/loosening keeps uncertainty high. [4] [5] [6]

2) Does China’s chip gap automatically mean it loses?

No. China can be behind in frontier training capacity and still win massive market share in applied AI through deployment scale, manufacturing strength, and infrastructure buildout. [6]

3) Why do people say Taiwan is “irreplaceable” here?

Because advanced semiconductor manufacturing capacity—especially at the cutting edge—is concentrated there, and that output is foundational for everyone’s AI hardware ambitions. [8]

4) What’s the real risk with military AI?

Dual-use tech plus strategic distrust. If each side assumes the other will weaponize AI, both sides feel pressured to accelerate—classic prisoner’s dilemma vibes. [1]

Pro Tips (if you’re building a business, not a foreign policy)

- De-risk your GPU plan: assume availability and rules can change mid-quarter. Have a second option.

- Design for portability: keep model code and infra as cloud-agnostic as you can (containers, IaC, standard runtimes).

- Use “good enough” models on purpose: don’t burn H100-class compute on jobs a smaller model can handle.

- Track policy like you track pricing: export controls can hit your roadmap as hard as a 30% cloud bill increase.
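To make the first and third tips concrete, here is a minimal, hypothetical Python sketch: it sends each job to the cheapest hardware tier that can handle it, and falls back to a second provider when the preferred one has no capacity. The provider names, tier labels, size thresholds, and the `has_capacity` flag are all illustrative assumptions, not any real cloud API.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical hardware tiers, ordered from cheapest to most capable.
TIERS = ["small", "mid", "h100_class"]


@dataclass
class Provider:
    name: str           # illustrative label, not a real cloud provider
    tiers: set[str]     # tiers this provider can currently supply
    has_capacity: bool  # stand-in for a real quota/availability check


def smallest_tier(job_size: int) -> str:
    """Pick the cheapest tier that can handle the job (thresholds are made up)."""
    if job_size <= 10:
        return "small"
    if job_size <= 100:
        return "mid"
    return "h100_class"


def place_job(job_size: int, providers: list[Provider]) -> tuple[str, str] | None:
    """Return (provider, tier) for the first option that can run the job.

    Tries the cheapest sufficient tier first and only escalates to a pricier
    tier if no listed provider can supply the cheaper one.
    """
    start = TIERS.index(smallest_tier(job_size))
    for tier in TIERS[start:]:
        for p in providers:  # providers listed in order of preference
            if p.has_capacity and tier in p.tiers:
                return p.name, tier
    return None  # nothing available: time to revisit the GPU plan


# Usage: the primary provider is out of capacity, so jobs land on the backup.
providers = [
    Provider("primary", {"mid", "h100_class"}, has_capacity=False),
    Provider("backup", {"small", "mid"}, has_capacity=True),
]
print(place_job(5, providers))    # ('backup', 'small')
print(place_job(50, providers))   # ('backup', 'mid')
print(place_job(500, providers))  # None
```

The design choice is simply ordering: cost is checked before provider preference, so a small job never grabs H100-class capacity just because it happens to be free, and a `None` result is the signal that your "second option" isn't enough.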

5 quick signs Nvidia export news will matter to you

If you’re wondering whether this is “above your pay grade,” run this quick check:

- You rely on a single cloud region for most of your training jobs.

- Your roadmap assumes GPU costs will drop “soon.”

- You’re expanding into markets where compliance rules differ wildly.

- You’re building on open-source models and need predictable inference capacity.

- Your customers are in regulated or defense-adjacent industries.

Caption: If you checked 2+ of these, congrats—you’re now in the chip soap opera.

Summary bullets: what to remember this week

- Nvidia GPUs aren’t just products anymore—they’re a policy lever in US–China competition. [4] [6]

- The US leads in frontier chips and many closed frontier models, but has energy/infra bottlenecks. [4] [6]

- China leads in diffusion and deployment at scale, often via open-source and fast infrastructure buildout. [4] [6]

- Expect volatility: restrictions can tighten or loosen, and that uncertainty is the real tax on builders. [4] [5]

Sources

- [1] Commentary on US–China military AI risks and prisoner’s dilemma dynamics (as referenced in provided research).

- [2] India AI Impact Summit 2026 and New Delhi AI Declaration participation (as referenced in provided research).

- [4] Analysis of early-2026 US–China AI competition: chips, models, diffusion, export controls, infrastructure (as referenced in provided research).

- [5] Reporting on eased US–China trade tensions and partial relaxation in semiconductor/rare earth controls (as referenced in provided research).

- [6] Reporting/analysis on Nvidia export curbs, Pax Silica framing, China’s infrastructure scale, and applied AI advantages (as referenced in provided research).

- [7] Estimates on China lagging ~6 months in frontier training due to chip constraints (as referenced in provided research).

- [8] Analysis noting Taiwan semiconductor production as irreplaceable for US AI hardware ambitions (as referenced in provided research).