AI-Generated Content Isn’t the Problem. Lazy Content Is.

AI-generated content isn’t ruining the internet—unverified, low-effort publishing is. Here’s a simple 5-step workflow to use AI like a power tool while keeping accuracy, trust, and your voice intact.

Is AI-generated content making the internet smarter… or just louder?

Here’s the thing… the tech isn’t the villain. The “publish 40 bland posts before lunch” mindset is. If you’ve ever read something that felt like it was written by a polite robot who’s never met a human, you already know what I mean.

The real problem: AI scales content, not trust

Let’s talk about what’s actually happening. AI makes it ridiculously easy to produce words. And the internet is responding exactly how you’d expect: more posts, more newsletters, more “thought leadership,” more everything.

But trust? Trust doesn’t scale like that. Trust is earned the slow way—by being accurate, clear, and useful.

And in 2026, accuracy is a little… tense. We’re watching major events unfold in real time with conflicting reports and political narratives flying everywhere. For example, as of March 1, 2026, there are disputed claims and denials around whether Iran’s Supreme Leader Ali Khamenei was killed following joint U.S.-Israeli strikes (Operation Epic Fury). Israeli and U.S. leaders are making claims, Iranian state media is denying them, and intelligence verification is ongoing. That’s not a “content” problem; it’s a verification problem. But AI-written posts can accidentally amplify the mess if you don’t apply basic editorial judgment.

So if you’re using AI to generate content, you need to decide: are you building a library people rely on, or a landfill Google tolerates?

Solution: use AI like a power tool, not a replacement for a human

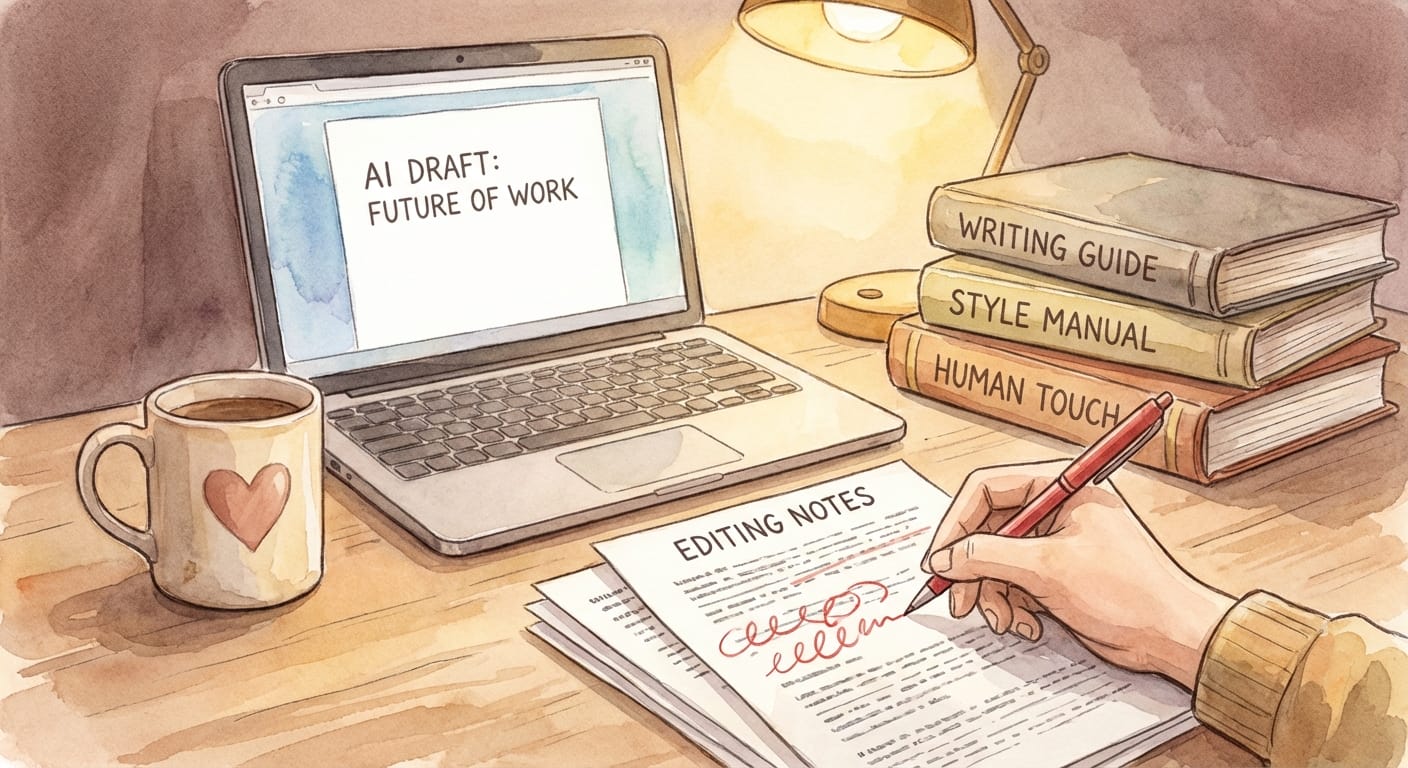

Look, I’ll be honest… AI is amazing at the parts of content creation that are repetitive and mechanical: outlining, summarizing, generating variations, rewriting for tone, translating, and turning “brain soup” into a first draft.

But the human parts still matter:

- Judgment: what’s true, what’s helpful, what’s irresponsible.

- Taste: what to cut, what to emphasize, what’s actually interesting.

- Accountability: putting your name on it.

Think of AI like a table saw. It’ll cut wood all day long. It won’t design the house, and it definitely won’t stop before it cuts off your thumb.

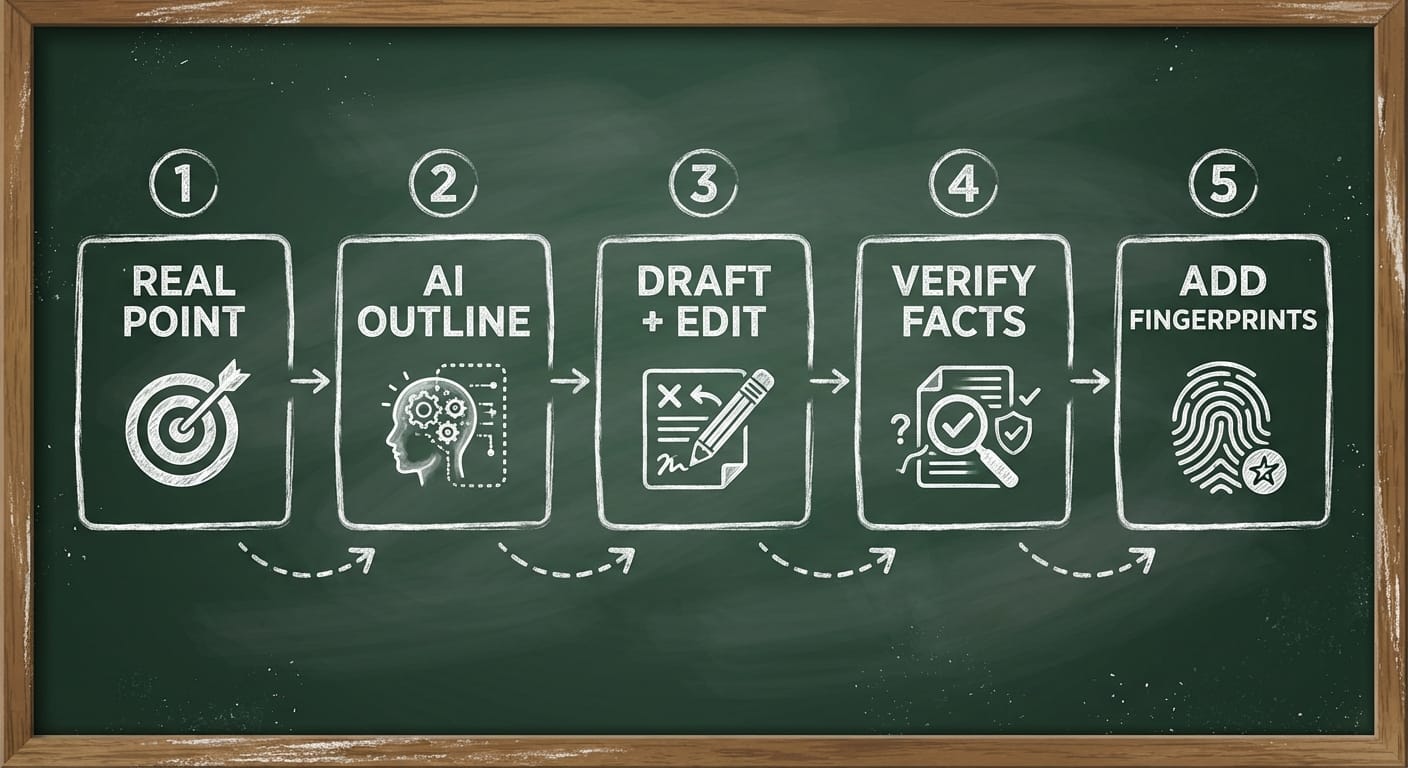

A practical 5-step workflow that doesn’t produce garbage

Here’s what most people miss: “AI content” isn’t one thing. The results depend on your process. This is my go-to workflow when you want content that’s both fast to produce and credible.

- Start with a real point: Before you prompt anything, write one sentence: “After reading this, a smart person will be able to ____.” If you can’t fill that in, stop.

- Have AI outline, not author: Ask for 2–3 outlines with different angles. Pick one and customize it. This keeps you in the driver’s seat.

- Draft fast, then switch modes: Use AI to generate a rough draft quickly. Then stop prompting and start editing like a human: remove fluff, add examples, tighten claims.

- Verify anything that smells like a “fact”: If the post mentions numbers, dates, names, medical/legal advice, or breaking news, verify it. Add citations. If you can’t verify it, say so.

- Add your fingerprints: Insert an opinion, a lesson learned, a mistake you made, a real screenshot, a mini case study, a template. Add something that couldn’t have come from a generic model.
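If you want a head start on the verification step, here is a minimal Python sketch that flags sentences a human should check before publishing. Everything in it is an assumption for illustration: the `flag_claims` function, the `FACT_PATTERNS` names, and the regexes themselves are hypothetical and deliberately crude. It only catches numbers, spelled-out dates, and a few risky keywords. It won’t reliably catch names, and it verifies nothing on its own.

```python
import re

# Hypothetical patterns for "things that smell like a fact".
# These are rough heuristics, not a real fact-checker; tune them for your content.
FACT_PATTERNS = {
    "number": re.compile(r"\b\d[\d,.]*%?"),
    "date": re.compile(
        r"\b(?:January|February|March|April|May|June|July|August|"
        r"September|October|November|December)\s+\d{1,2}(?:,\s*\d{4})?\b"
    ),
    "risky_topic": re.compile(
        r"\b(?:medical|legal|diagnos\w*|lawsuit|breaking)\b", re.IGNORECASE
    ),
}


def flag_claims(text):
    """Return (sentence, reasons) pairs for sentences a human should verify."""
    # Naive sentence split on ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        reasons = [
            name for name, pattern in FACT_PATTERNS.items()
            if pattern.search(sentence)
        ]
        if reasons:
            flagged.append((sentence, reasons))
    return flagged
```

Run your draft through `flag_claims(draft_text)` and treat the result as a to-do list: each flagged sentence gets a source, a hedge, or the delete key. The judgment call is still yours.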

Pro tips: prompts that actually help

- For outlines: “Give me 3 outlines with different viewpoints (beginner, skeptic, practitioner).”

- For clarity: “Rewrite at an 8th-grade reading level without losing technical accuracy.”

- For usefulness: “Add a checklist and 3 common mistakes people make.”

- For tone: “Make it sound like a candid founder explaining this to a friend.”

Common mistakes (aka how AI content goes off the rails)

- Publishing unverified claims: AI will confidently summarize rumors. That’s how misinformation spreads. In fast-moving situations with disputed reporting (like today’s Iran escalation), you either cite reputable sources and note uncertainty, or you don’t publish.

- Over-optimizing for SEO: If your post reads like it was designed by a spreadsheet, humans bounce. And bounce is the most honest metric on earth.

- Forgetting the “why me?” factor: If anyone can generate the same post in 30 seconds, you don’t have an asset—you have commodity text.

Case study snippet: “We doubled output and lost conversions”

A founder friend (real scenario, details anonymized) used AI to crank out 30 blog posts in a month for a B2B SaaS. Traffic went up. Trials went down.

Why? The posts were technically “correct,” but they were generic. No product perspective, no strong recommendations, no screenshots, no war stories. They were like brochures for nobody.

They fixed it by publishing less and adding what AI can’t: decision-making frameworks, pricing comparisons, implementation gotchas, and a clear POV. Conversions recovered within a few weeks.

FAQ: the questions everyone’s thinking

1) Will Google punish AI-generated content?

Google’s guidance has consistently emphasized rewarding helpful content over how it’s produced. The risk isn’t “AI” as a label—it’s low-value pages at scale. (See Google Search guidance on AI-generated content.)

2) Should I disclose that I used AI?

My opinion: if AI materially shaped the content and your audience expects transparency, disclose it. Especially in anything sensitive (health, finance, breaking news). Trust is a long game.

3) What’s the safest way to use AI for newsy topics?

Use it to summarize confirmed reporting, include multiple reputable sources, and explicitly label uncertainty. If facts are disputed, say “reports conflict” and link to sources.

4) What content is AI worst at?

Original reporting, nuanced domain judgment, and anything requiring real-world verification. AI can write a paragraph about a strike or a policy change—it can’t prove it happened.

Sources (so we’re doing this like adults)

- Google Search Central: Guidance on AI-generated content

- Reuters (for ongoing international coverage and verification-driven reporting) — referenced as a standard for confirmation when claims conflict

- Breaking-news context from a research brief (as of March 1, 2026): disputed reports around Operation Epic Fury, casualty figures, and retaliation claims (verification ongoing; partisan sources conflict)

Action challenge: try this today

Pick one piece of content you were going to “just have AI write.” Now do it with the 5-step workflow above—especially the verification step and the “add your fingerprints” step.

The bottom line is… AI makes writing cheaper. Your job is to make trust expensive.