AI-Generated Content: Useful, Dangerous, and Totally Inevitable

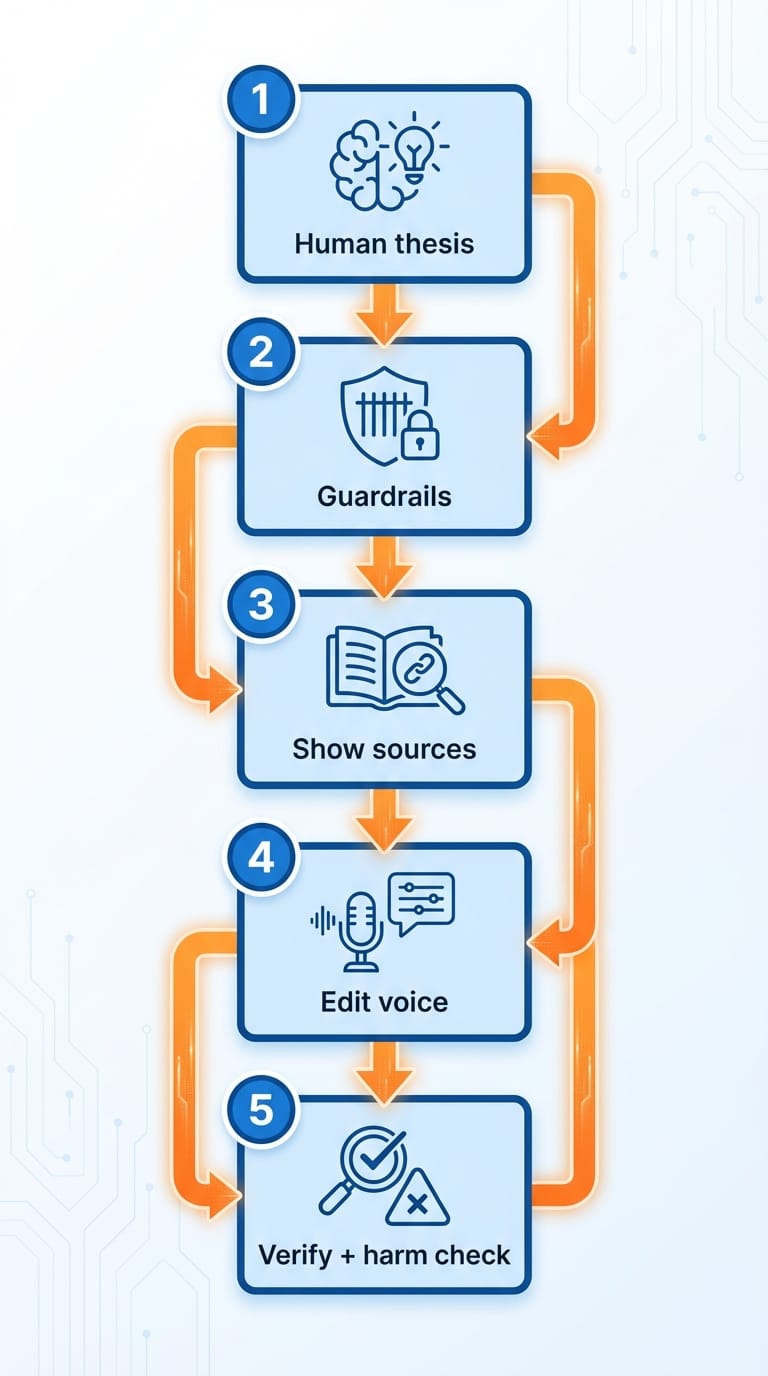

AI-generated content can scale your output fast—but without a human thesis, guardrails, and verification, it’ll scale your mistakes even faster. Here’s a practical 5-step workflow to get the upside without torching trust.

AI-generated content isn’t “fake.” It’s just unowned. And if you don’t put a real human in charge of it, it’ll happily crank out a thousand perfectly readable paragraphs that say… basically nothing.

Here’s the thing: the tech is good enough now that the hard part isn’t writing. The hard part is deciding what should be said, what’s true, what’s appropriate, and what’s worth shipping with your name on it.

The real problem: speed beats judgment

If you’re tech-savvy, you’ve probably seen the pattern:

- Someone gets access to a model.

- They discover they can publish 10× more content.

- They ship it without a strategy, without fact-checking, and without a voice.

- Six weeks later, they’re wondering why traffic is flat (or worse, why they’re in a PR mess).

And look, I’ll be honest… AI content isn’t the villain. Unsupervised AI content is. It’s like giving a teenager the keys to a Ferrari. The car isn’t evil. But you probably shouldn’t be surprised when something ends up in a ditch.

A quick reality check: the “current events” trap

Here’s what most people miss: AI is insanely good at writing plausible sentences, not necessarily true ones. That gap gets brutal when you’re writing about fast-moving news.

For example, my research notes for this piece describe a rapidly evolving Middle East conflict, including claims of mass casualties, strikes on civilian sites, and leadership deaths, with details still disputed and changing by the day. In situations like that, “generate me a summary” can turn into “publish a confident-sounding paragraph that’s wrong in three different ways.” The human job is to slow the machine down.

If you’re publishing anything tied to breaking events, your workflow should assume uncertainty and bias by default. (We’ll talk about how in a second.)

Problem → Solution: Use AI like a production assistant, not an author

The best mental model I’ve found is this: AI is your production assistant. It drafts, it outlines, it rewrites, it formats. But it doesn’t own the thesis, the ethics, or the final call.

So what do you do instead of “let it rip”?

Step 1: Start with a human thesis (one sentence)

Before you prompt anything, write one sentence that answers: What do I actually believe? If you can’t do that, you’re about to generate word salad at scale.

Step 2: Give AI guardrails (voice, audience, and “don’t do” rules)

Add constraints like:

- Who it’s for (and what they already know)

- Your tone and examples

- Hard limits (no medical/legal advice, no speculation, no claims without sources)
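If you like keeping this stuff in code, here's a minimal sketch of Steps 1 and 2 together: a reusable "house style" object plus a function that refuses to build a prompt until a thesis exists. The names `HouseStyle` and `build_prompt` and the prompt wording are my own illustration, not any particular tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class HouseStyle:
    """Reusable guardrails for a drafting prompt (field names are illustrative)."""
    audience: str
    tone: str
    hard_limits: list[str] = field(default_factory=list)


def build_prompt(thesis: str, style: HouseStyle) -> str:
    """Combine the human-written thesis with the guardrails into one prompt string."""
    if not thesis.strip():
        # Step 1 comes first: no thesis, no prompt.
        raise ValueError("Write the one-sentence thesis before prompting.")
    limits = "\n".join(f"- {rule}" for rule in style.hard_limits)
    return (
        f"Thesis (do not change it): {thesis.strip()}\n"
        f"Audience: {style.audience}\n"
        f"Tone: {style.tone}\n"
        f"Hard limits:\n{limits}"
    )


style = HouseStyle(
    audience="tech-savvy readers who already use AI tools",
    tone="direct, first person, concrete examples",
    hard_limits=[
        "No medical or legal advice",
        "No speculation",
        "No claims without sources",
    ],
)
prompt = build_prompt("Unsupervised AI content is the problem, not AI content.", style)
print(prompt)
```

The design choice that matters is the `ValueError`: the guardrails are cheap to reuse, but the thesis has to come from a person every time.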

Step 3: Make it show its work (sources, assumptions, unknowns)

When the topic touches news, science, finance, or anything reputationally spicy, require:

- Links to primary sources

- A list of what’s uncertain

- What it inferred vs. what it cited
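One way to make "show its work" enforceable is to track each factual claim as a small record and flag anything that was inferred or has no source. This is a sketch under my own assumptions: the `Claim` fields and the `needs_review` helper are hypothetical, the URL is a placeholder, and you would still have to fill in the records by hand or from a structured model response.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    """One factual statement from a draft (structure is illustrative)."""
    text: str
    source_url: Optional[str] = None  # link to a primary source, if there is one
    inferred: bool = False            # True if the model inferred it rather than cited it


def needs_review(claims: list[Claim]) -> list[Claim]:
    """Return claims a human must check: anything inferred or missing a source."""
    return [c for c in claims if c.inferred or not c.source_url]


draft_claims = [
    Claim("Statement backed by a primary source", source_url="https://example.com/primary"),
    Claim("Statement the model filled in on its own", inferred=True),
    Claim("Statement with no link at all"),
]

for claim in needs_review(draft_claims):
    print("CHECK BEFORE PUBLISHING:", claim.text)
```

Note that a cited claim still lands in the review pile if it was inferred from the source rather than stated in it. A link alone does not make a claim true.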

Step 4: Edit for “you-ness” (voice + stakes)

This is where most AI content dies. If it doesn’t sound like a person with opinions and scar tissue, readers bounce. Add:

- Your actual examples

- Specific tradeoffs

- A clear recommendation

Step 5: Run a pre-publish checklist (accuracy + harm)

Especially for current events: confirm names, dates, casualty numbers, and attribution. If you can’t confirm it, label it as unverified or leave it out. The bottom line is… speed is optional; credibility is not.
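The "label it or leave it out" rule is simple enough to write down as code. Here's a rough sketch, assuming you've already marked each detail as confirmed or not; `Fact` and `pre_publish` are hypothetical names, and the example entries are placeholders rather than real data.

```python
from dataclasses import dataclass


@dataclass
class Fact:
    """One checkable detail from a draft: a name, date, number, or attribution."""
    text: str
    confirmed: bool


def pre_publish(facts: list[Fact], label_unverified: bool = True) -> list[str]:
    """Keep confirmed facts; label the rest as unverified, or drop them entirely."""
    kept = []
    for fact in facts:
        if fact.confirmed:
            kept.append(fact.text)
        elif label_unverified:
            kept.append(f"[unverified] {fact.text}")
        # Otherwise the unconfirmed fact is left out.
    return kept


facts = [
    Fact("Name spelled as in the primary source", confirmed=True),
    Fact("Casualty figure from a single unconfirmed post", confirmed=False),
]

print(pre_publish(facts))                          # label unconfirmed details
print(pre_publish(facts, label_unverified=False))  # or leave them out
```

Whether to label or drop is an editorial call, so it's a parameter here. The confirming itself stays a human job.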

Pro tips: what I do in real life

- Write the intro myself. It forces clarity and sets tone.

- Use AI for structure. Outlines, section headers, and transitions are its happy place.

- Keep a “house style” prompt. A reusable prompt that includes my do’s/don’ts.

- Require citations for factual claims. No link? No claim.

Common mistakes (a.k.a. how teams blow up their own trust)

- Mistake #1: Publishing AI summaries of breaking news. If the story is changing hourly, your content will age like milk. Use AI for internal briefings, not public certainty.

- Mistake #2: Confusing “sounds legit” with “is legit.” AI will confidently fill gaps. That’s not intelligence; it’s autocomplete with swagger.

- Mistake #3: Letting AI write in a generic corporate voice. Congrats, you just made content that competes with everyone else’s content, and loses.

- Mistake #4: No disclosure or policy. If AI is part of your process, define how. Readers don’t like surprises.

FAQ: the questions people actually ask

Is AI-generated content bad for SEO?

Not automatically. Google’s guidance focuses on helpfulness and quality, not whether a human typed every word. But low-value content produced at scale is a known risk under Google’s spam policies. (Translation: don’t spam.)

Should I disclose that I used AI?

I’m pro-disclosure when AI materially contributed to the output, especially in journalism-ish or educational content. At minimum, have an internal policy so your team is consistent.

Can I trust AI for facts?

You can trust it to generate a starting point. You cannot trust it to be your source of truth. For anything important, verify with primary sources.

What’s the safest use of AI content today?

Repurposing your own material: turning podcasts into posts, notes into outlines, and drafts into variations—stuff where you already own the truth.

Sources (because we’re not guessing)

- Google Search Central: guidance on AI-generated content and quality systems (helpful content, spam policies): developers.google.com

- Google Search Central Blog: “Google Search’s guidance about AI-generated content”: developers.google.com

- NIST AI Risk Management Framework (governance, risk, and trust considerations): nist.gov

Action challenge

Pick one piece of content you publish repeatedly (a blog post, a newsletter, release notes). Build the 5-step workflow above into a template today. Then run your next draft through it and see what changes.

Because AI isn’t replacing your writing. It’s replacing your excuses for not having a point.